Our eyes are never still. Instead, fixational eye movements — small, continuous movements that we are not aware of making — keep them moving even between our voluntary gaze shifts. A new study suggests that involuntary fixational eye movements are more important for vision than previously believed.

The ability of humans to perceive the world as stable while having eyes that are constantly moving has long been a puzzle for scientists. According to earlier studies, the human visual system creates a stable world image between voluntary gaze shifts by relying solely on sensory information from fixational eye movements.

However, the new study suggests that there might be additional contributing factors. According to the researchers, fixational eye movements provide the visual system with sensory information as well as information about the motor behaviour involved in those movements.

Tracking Movements and Gaze Shifts

Even though we are unaware of moving our eyes, the brain has precise knowledge of how the eyes move. Using this knowledge, our brains can infer spatial relationships and perceive the world as stable rather than blurred.

Contrary to what is generally believed, the study's findings show that spatial representations (the locations of objects in relation to one another) are based on a combination of sensory and motor activity from both voluntary and involuntary eye movements.

“It was already clear that the visual system uses sensory and motor knowledge from large voluntary movements, either gaze shifts we perform to look at different parts of a scene, or tracking movements for following moving objects. But scientists didn’t think smaller, involuntary movements like fixational eye movements could be used to convey information through motor signals,”

said Michele Rucci, a professor in the brain and cognitive sciences department and Center for Visual Science at the University of Rochester.

Eye Motor Activity

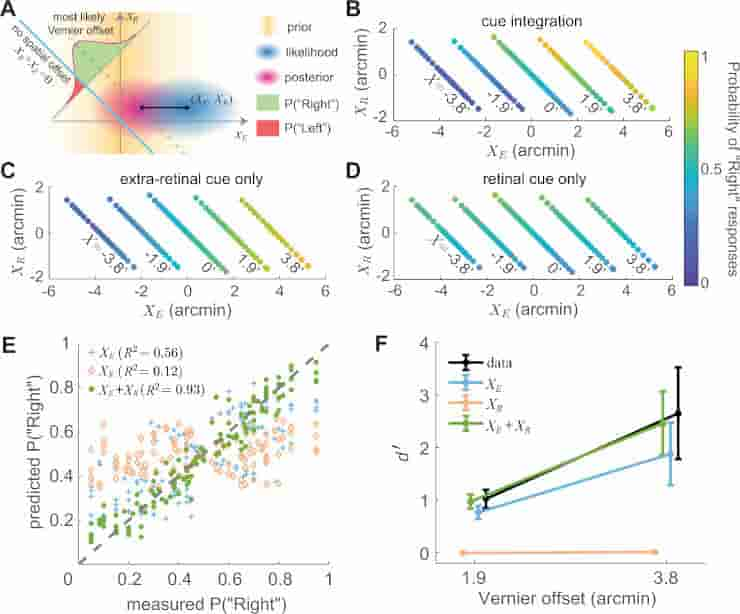

A: An ideal observer model that combines retinal ($x_R$) and extraretinal ($x_E$) signals. The model assumes sensory measurements to be corrupted by unbiased additive Gaussian noise (standard deviations $\sigma_R$ and $\sigma_E$) and applies a uniform prior to $x_R$ and a zero-mean Gaussian prior to $x_E$ ($\sigma = \sqrt{2DT}$, where $D$ is the diffusion constant of the drift and $T$ is the ISI), the latter based on the assumption that ocular drift resembles Brownian motion. In each trial, the likelihood of any given combination $(x_E, x_R)$ is first converted into a joint posterior probability distribution and then integrated along the $-45^\circ$ line $x_E + x_R = X$ (dashed line) to evaluate the probability of any given Vernier offset $X$. The left/right perceptual report in a trial is determined by which side of the zero-offset line (cyan line) gives higher probability. B–D: Response patterns are best predicted by the model when combining both cues (B); the model that uses only extraretinal information (C) does not account for the dependence on $x_R$, and the model that uses only retinal information (D) fits poorly. Graphic conventions are as in Fig. 2D. E, F: Comparison of experimental data and model predictions of responses and $d'$. E: Probability of responding “Right” for various combinations of $x_R$ and $x_E$ (the same data points as in B–D). F: Average performance measured as $d'$ (N = 7 subjects). Error bars represent ± one SEM across subjects. The cue integration model predicts both subject responses and overall performance with significantly greater accuracy than the single-cue models.

Credit: Nat Commun 14, 269 (2023) CC-BY
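The cue-combination idea in the figure caption can be illustrated with a short Python sketch. This is not the authors' code. It assumes that the retinal and extraretinal noise sources are independent, that the Brownian-motion prior has the standard one-dimensional width sqrt(2DT), and that a positive offset means "right". Under those assumptions, integrating the joint posterior along the lines x_E + x_R = X has a closed Gaussian form. All function names, parameter names, and units are hypothetical.

```python
import math


def drift_prior_sd(diffusion_const, isi):
    """SD of a 1D Brownian displacement after time `isi` (assumed form sqrt(2*D*T))."""
    return math.sqrt(2.0 * diffusion_const * isi)


def posterior_offset(m_r, m_e, sd_r, sd_e, prior_sd_e):
    """Mean and SD of the posterior over the offset X = x_R + x_E.

    m_r, m_e   : noisy retinal and extraretinal measurements
    sd_r, sd_e : SDs of the Gaussian measurement noise (assumed independent)
    prior_sd_e : SD of the zero-mean Gaussian prior on x_E
    """
    # Uniform prior on x_R: its posterior is simply N(m_r, sd_r^2).
    # Gaussian prior on x_E: the estimate is shrunk toward zero by weight w.
    w = prior_sd_e**2 / (prior_sd_e**2 + sd_e**2)
    mean_e = w * m_e
    var_e = w * sd_e**2
    # Sum of two independent Gaussians: means add, variances add.
    return m_r + mean_e, math.sqrt(sd_r**2 + var_e)


def prob_right(m_r, m_e, sd_r, sd_e, prior_sd_e):
    """Posterior probability that the offset X is positive ("right" by assumption)."""
    mean_x, sd_x = posterior_offset(m_r, m_e, sd_r, sd_e, prior_sd_e)
    return 0.5 * (1.0 + math.erf(mean_x / (sd_x * math.sqrt(2.0))))


def report_side(m_r, m_e, sd_r, sd_e, prior_sd_e):
    """Pick the side with higher posterior probability (ties go to "left" arbitrarily)."""
    return "right" if prob_right(m_r, m_e, sd_r, sd_e, prior_sd_e) > 0.5 else "left"
```

A call such as `report_side(0.5, -0.2, 0.3, 0.4, drift_prior_sd(20.0, 0.1))` uses made-up numbers purely for illustration. Making `prior_sd_e` very small collapses the sketch toward a retinal-only observer, and making `sd_e` very large has the same effect, which is one way to see why the two single-cue models in panels C and D behave differently from the combined model.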

The research demonstrates that the visual system constantly monitors motor activity even when people think they are keeping a steady gaze. The study also demonstrates that, in vision as in other senses such as touch and smell, motor behaviour strongly shapes incoming sensory signals.

The findings have significant ramifications for future research on visual perception and will aid in our understanding of visual impairments related to abnormal eye movements.

“Our study unveils that involuntary eye movements, which are widely discarded as motor noise, make major contributions to spatial representations of the world. As we show, studying spatial representations without considering motor activity, as is often done in current neuroscience, is severely limiting,”

said first author Zhetuo Zhao, a PhD student in Rucci’s lab.

Visual Fixation

Fixational eye movement, which the nervous system performs to maintain visibility, continuously stimulates neurons in the early visual areas of the brain that respond to transient stimuli.

During normal viewing, saccades alternate with visual fixations. Smooth pursuit is the notable exception: it is controlled by a different neural substrate that appears to have evolved for hunting prey.

The term “fixation” can refer either to the act of fixating or to the point in time and space on which gaze is focused. A fixation is the period between two saccades during which the eyes are essentially stationary and visual information is processed. Without retinal jitter, a laboratory condition known as retinal stabilization, percepts tend to fade rapidly.

References:

- Zhao, Z., Ahissar, E., Victor, J.D. et al. Inferring visual space from ultra-fine extra-retinal knowledge of gaze position. Nat Commun 14, 269 (2023).

- Alexander, R.G., Martinez-Conde, S. Fixational eye movements. In: Eye Movement Research. Studies in Neuroscience, Psychology and Behavioral Economics (2019). doi:10.1007/978-3-030-20085-5_3